NEXT STORY

Embodied sound

RELATED STORIES

| Story | | Views | Duration |
|---|---|---|---|
| 111. The role of sound designer | | 227 | 03:31 |
| 112. Peculiarities of Francis Ford Coppola's style | | 121 | 04:09 |
| 113. Apocalypse Now: The General's speech scene | | 116 | 02:36 |
| 114. Harvey Keitel in Apocalypse Now | | 230 | 00:54 |
| 115. Dialogue does not sound so good in stereo | 2 | 1245 | 03:54 |
| 116. Encoded sound | | 206 | 01:58 |
| 117. Embodied sound | | 157 | 04:07 |
| 118. 'The last thing you want to do is win the Oscar' | | 213 | 02:23 |
| 119. Disney Studios reaching out | | 115 | 04:41 |
| 120. Writing the screenplay for Return to Oz | | 99 | 03:03 |

And so what is it about the way the human being understands dialogue that's different from the way we understand sound effects? Because clearly there is a difference there. And I think what the difference is, is a question of what I came to call encoded sound and embodied sound. Language is an encoded sound because language is a code. These are just syllables that we can make with our lips and mouth and throat; we have determined that 'cat' is a sound that means an animal with a furry body and whiskers and pointy ears and a tail.

And that, in a different language, that's a different word. You have to know the language in order to break the code. So, when somebody is speaking to you, your brain is very busy. Even though you know the language very well, if you know English very well and somebody's speaking to you in English, your brain is nonetheless very busy behind the scenes cracking open this code and extracting the meaning out of it.

And under those circumstances, I think, my hypothesis is that the brain lets go of directionality in a cinematic situation. It says, 'I'm too busy to worry about that; I will believe it's coming from wherever my eyes say the person is.' So, in that case, the vision of the location of where the person is steers our belief that that sound is actually coming from there. Because otherwise we're too busy... The brain is occupied decoding it.

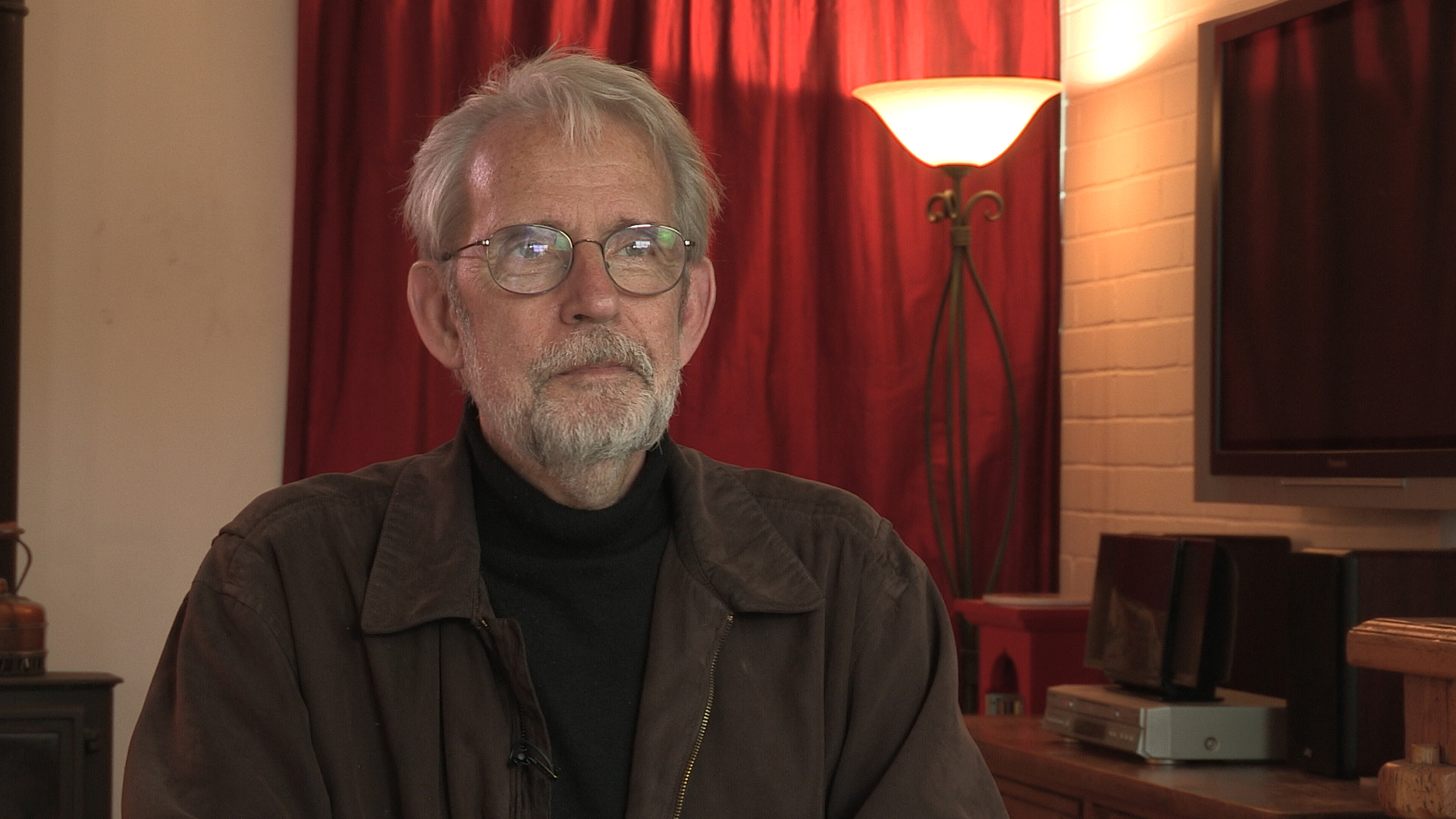

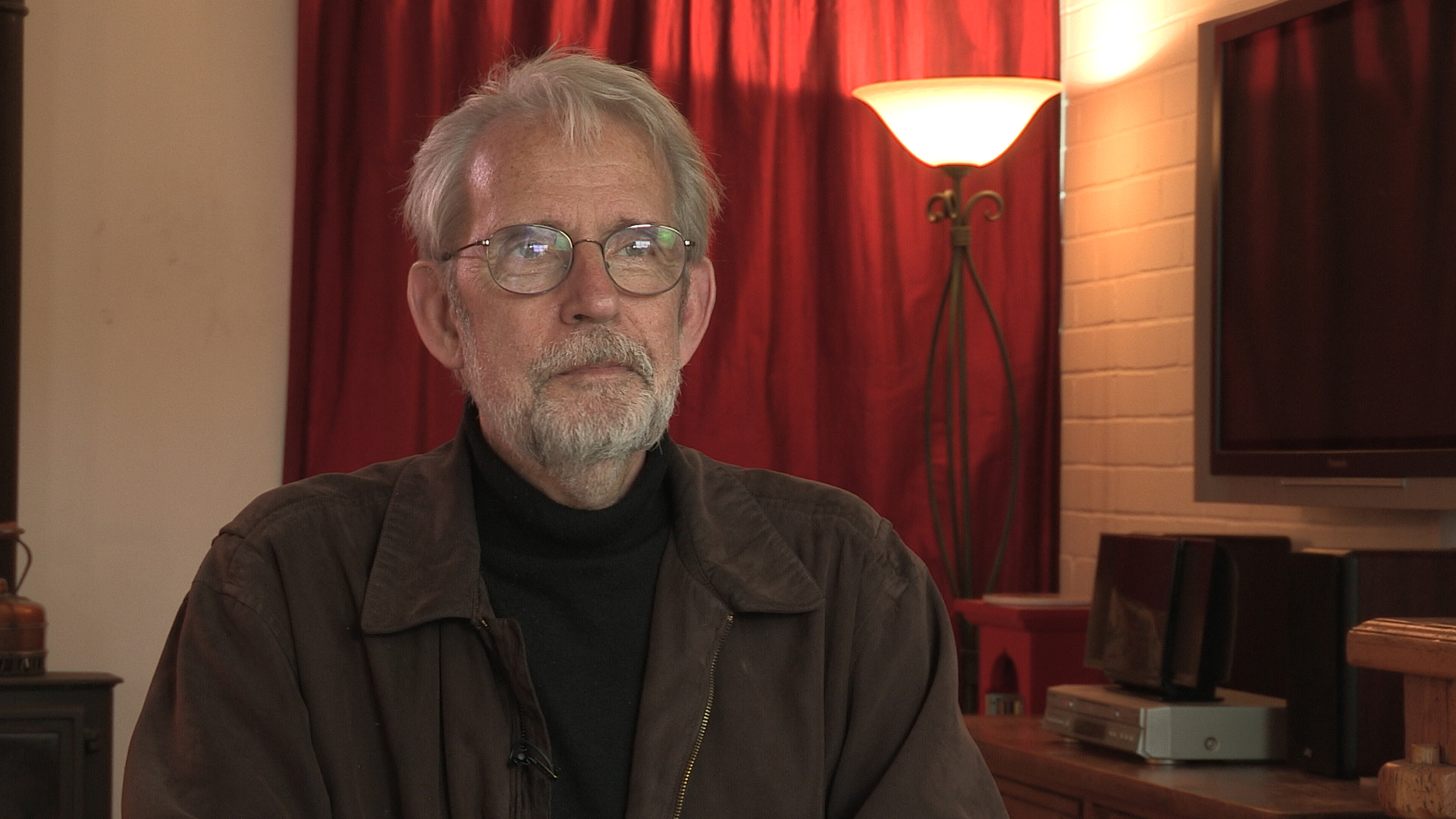

Born in 1943 in New York City, Murch graduated from the University of Southern California's School of Cinema-Television. His career stretches back to 1969 and includes work on Apocalypse Now, The Godfather I, II, and III, American Graffiti, The Conversation, and The English Patient. He has been referred to as 'the most respected film editor and sound designer in modern cinema.' In a career that spans over 40 years, Murch is perhaps best known for his collaborations with Francis Ford Coppola, beginning in 1969 with The Rain People. After working with George Lucas on THX 1138 (1971), which he co-wrote, and American Graffiti (1973), Murch returned to Coppola in 1974 for The Conversation, resulting in his first Academy Award nomination. Murch's pioneering achievements were acknowledged by Coppola in his follow-up film, the 1979 Palme d'Or winner Apocalypse Now, for which Murch was granted, in what is seen as a film-history first, the screen credit 'Sound Designer.' Murch has been nominated for nine Academy Awards and has won three: Best Sound for Apocalypse Now (for which he and his collaborators devised the now-standard 5.1 sound format) and, in an unprecedented double, both Best Film Editing and Best Sound for The English Patient. Murch's contributions to film reconstruction include 2001's Apocalypse Now: Redux and the 1998 re-edit of Orson Welles's Touch of Evil. He is also the director and co-writer of Return to Oz (1985). In 1995, Murch published a book on film editing, In the Blink of an Eye: A Perspective on Film Editing, in which he urges editors to prioritise emotion.

Title: Encoded sound

Listeners: Christopher Sykes

Christopher Sykes is an independent documentary producer who has made a number of films about science and scientists for BBC TV, Channel Four, and PBS.

Tags: sound, language, brain, decoding, encoding

Duration: 1 minute, 58 seconds

Date story recorded: April 2016

Date story went live: 29 March 2017